NVIDIA is introducing a new class of 3D assets called "SimReady" assets, the building blocks of virtual worlds.

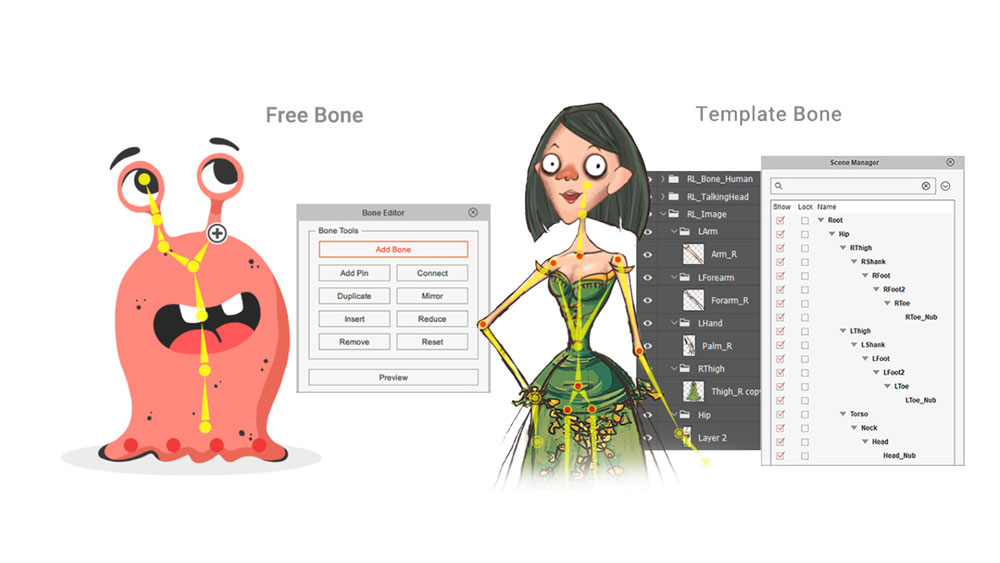

The next wave of industries and AI requires us to build physically accurate virtual worlds that are indistinguishable from reality. Creating virtual worlds is hard, and today's universe of 3D assets is inadequate because it captures only the visual representation of an object. Whether the goal is building digital twins or virtual worlds for training and testing autonomous vehicles or robots, 3D assets need many more technical properties, which in turn requires developing and adopting novel processes, techniques, and tools.

Beau Perschall, Director, Omniverse Sim Data Ops, NVIDIA. Renato Gasoto, Robotics & AI Engineer, NVIDIA.

Once you import your character into Character Animator (CA), any change you make to the character's Illustrator (or Photoshop) file is adopted by Character Animator automatically as soon as you click save. In this way you can change anything on your character (colors, size, whole body parts) and the changes will instantly appear in CA.

A webcam and a microphone are essential for working with CA because the program uses your face and voice to generate the movement of your character. The way it works is simple! If your character artwork in Illustrator or Photoshop is properly set up, then when you import it into Character Animator, the program recognizes and automatically tags the facial features of your character. Those features are then rigged to correspond to your own facial features. The features that are recognized automatically are the pupils, eyebrows, eyelids, blinking, face movements, and the different mouth shapes. Their movement is generated by your performance in front of the webcam: if you blink, your character blinks too. The one exception is lip sync (the different mouth shapes changing according to sound), which is generated from your voice in the microphone. Instead of recording your voice performance, you can also import an mp3 file and generate lip sync from it.

For now, you need to know that Character Animator works with artwork created in Illustrator and Photoshop only.

To work with Character Animator you need Adobe Creative Cloud, a service that gives you access to different Adobe apps according to your Creative Cloud plan. To use the Character Animator app, your plan must include not only Character Animator itself but also either Adobe Illustrator or Adobe Photoshop. Follow this link to learn more about the service or choose a plan according to your needs: Adobe Creative Cloud.

Although it can be used as a standalone program, Character Animator is generally part of Adobe After Effects CC 2015 or later. That means if you have After Effects, you already have Character Animator. To open it, click File -> Open Character Animator.

You can use premade puppets, which you can import directly into the software, or you can prepare your own. Creating a working, properly set up character is a complex process, which is why we will dedicate the following tutorials of this series to it in detail (links at the bottom of the article).
